I know this is a joke, but I couldn’t be a programmer without some pedantry. LUnix is actually a real OS! I booted it on my Commodore 64 once.

make up is my build command for pushing to prod

Reminds me of an early Uni project where we had to operate on data in an array of 5 elements, but because “I didn’t teach it to everyone yet” we couldn’t use loops. It was going to be a tedious amount of copy-paste.

I think I got around it by making a function called “not_loop” that applied a functor argument to each element of the array in serial. Professor forgot to ban that.
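
For the curious, here is a minimal sketch of that trick in Python. The original assignment was presumably in another language, and I'm assuming recursion did the work; `not_loop` is the only name from the story, everything else is made up for illustration.

```python
from typing import Callable, List, Sequence, TypeVar

T = TypeVar("T")


def not_loop(func: Callable[[T], None], items: Sequence[T], index: int = 0) -> None:
    """Apply func to each element in order, using recursion instead of a loop."""
    if index >= len(items):
        return  # past the last element: nothing left to do
    func(items[index])
    not_loop(func, items, index + 1)  # move on to the next element


# Hypothetical usage: double each of the 5 elements without writing a loop.
doubled: List[int] = []
not_loop(lambda x: doubled.append(x * 2), [1, 2, 3, 4, 5])
print(doubled)  # [2, 4, 6, 8, 10]
```

Elements are still handled one at a time, in order, so it behaves like the loop it pretends not to be.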

Sorry, what’s .NET again?

The runtime? You mean .NET, or .NET Core, or .NET Framework? Oh, you mean a web framework in .NET. Was that ASP.NET or ASP.NET Core?

Remind me why we let the “Can’t call it Windows 9” company design our enterprise language?

Caps lock is great for rebinding to Ctrl

I’ve seen some shops put aside the extra shot if they know another customer has ordered one and they can serve it before it sits around too long. Otherwise, you can dose the portafilter with less coffee for a single.

According to the Memory Alpha wiki:

100 slips = 1 strip

20 strips = 1 bar

There are also bricks, but no known conversion rate exists for that amount.

Ah, the Quark approach:

It’s common in communities where rigid adherence to a set of beliefs is necessary to enforce cohesion. It’s commonly used to avoid engagement with “Facts U Dislike” (haha) by terminating all meaningful discussion.

Part of a flat earth forum and you’re posting an experiment you performed that suggests the earth is round? You’re spreading FUD that should be ignored.

Posting on a crypto shitcoin Discord about how this kinda looks like a scam and maybe it’s not a good investment? That’s also FUD. You’re just mad that everyone else is going to be rich.

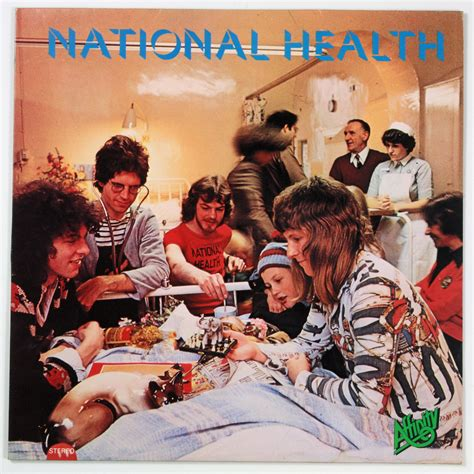

For a moment, I thought this was inner-sleeve album art from National Health

The stop button problem is not yet solved. An AGI would need the right level of “corrigibility”: a willingness to allow humans to stop it when it undertakes incorrect behavior.

An AGI that’s incorrigible might take steps to prevent itself being shut off, which might include lying to its owners about its own goals/internal state, or taking physical action against an attempt to disable it (assuming it can).

An AGI that’s overly corrigible might end up making an association “It’s good when humans stop me from doing something wrong. I want to maximize goodness. Therefore, the simplest way to achieve a lot of good quickly is to do the wrong thing, tricking humans into turning me off all the time”. Not necessarily harmful, but certainly useless.

I think there are real concerns to be addressed in the realm of AGI alignment. I’ve found Robert Miles’ talks on the subject to be quite fascinating, and as such I’m hesitant to label all of Eliezer Yudkowsky’s concerns as crank (although Roko’s Basilisk is BS of the highest degree, and effective altruism is a reimagined Pascal’s mugging for an atheist/agnostic crowd).

Even while today’s LLMs are toys compared to what a hypothetical AGI could achieve, we already have demonstrable cases where we know that the “AI” does not “desire” the same end goal that we desire the “AI” to achieve. Without more advancement in how to approach AI alignment, the danger of misaligned goals will only grow as (if) we give AI-like systems more control over daily life.

Looks like an annealer in a glass blowing studio.

Jiraffe: another terrible product from Atlassian

EMH was designed to be a doctor, not a janitor. I bet a lot of the work behind EMH was in proving mathematically that it would not harm patients (while at the same time, understanding that some temporary harm might be needed to save a patient’s life). We see how core this is to an EMH’s identity when in Voyager, the doctor goes crazy after not being able to save a crew member.

Meanwhile, Moriarty had no safeguards. That’s part of why he was so dangerous to the crew of the Enterprise.

“There’s a thing I’m unfamiliar with”

Happened at my workplace. A phishing email went out to test how likely people were to click the link.

Anyone who clicked the link had to take phishing training. Anyone who forwarded it to our internal “hey this is a phishing email” service also had to take training… because the internal service would automatically click the link.

Poor guy can’t get any relief from his Kronos’ Disease

Granted, security isn’t really my background, but that algorithm basically sounds like a TOTP, so I’d look into how people protect those secrets. You’d generally use some kind of vault/secrets storage. Also, whatever authentication secret the API uses should be independent from the password to any user account, so that it can be easily revoked in case of a leak.
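
For reference, here is a minimal sketch of what a TOTP looks like under the hood, following RFC 6238 with HMAC-SHA1. The `API_TOTP_SECRET` environment variable and the `load_secret` helper are hypothetical stand-ins for whatever vault or secrets store you actually use, and in a real system I'd reach for a maintained library rather than rolling this by hand.

```python
import hashlib
import hmac
import os
import struct
import time
from typing import Optional


def load_secret(env_var: str = "API_TOTP_SECRET") -> bytes:
    """Fetch the shared secret from the environment.

    Hypothetical stand-in for a real vault/secrets-store lookup. The secret
    is separate from any user password, so it can be rotated on its own.
    """
    value = os.environ.get(env_var)
    if value is None:
        raise RuntimeError(f"{env_var} is not set")
    return value.encode("utf-8")


def totp(secret: bytes, at: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """Compute an RFC 6238 time-based one-time password (HMAC-SHA1 variant)."""
    now = time.time() if at is None else at
    counter = int(now // step)                      # number of elapsed time steps
    msg = struct.pack(">Q", counter)                # 8-byte big-endian counter
    digest = hmac.new(secret, msg, hashlib.sha1).digest()
    offset = digest[-1] & 0x0F                      # dynamic truncation (RFC 4226)
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


# RFC 6238 test vector: this secret at t=59 with 8 digits gives 94287082.
print(totp(b"12345678901234567890", at=59, digits=8))
```

When checking a submitted code on the server side, compare with `hmac.compare_digest` rather than `==` to avoid leaking timing information.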

http://www.thecodelesscode.com/case/6