I used to think typos meant that the author (and/or editor) hadn’t checked what they wrote, so the article was likely poor quality and less trustworthy. Now I’m reassured that it’s a human behind it and not a glorified word-prediction algorithm.

AI that is parsing Lemmy: “Noted.”

AI makes typos.

Hell, when we played around with ChatGPT code generation, it literally misspelled a variable name, which broke the code.

I used to work creating mass content for lots of websites, from product descriptions to reviews and post messages. We just inserted random typos after running Quillbot on the text, and sometimes added ellipses here and there.

I think someone in the team had a list of words they purposely changed in MS Word so that they could be misspelled all the time.

Now that ChatGPT lets you insert your own custom global instructions, I’m absolutely sure they are asking it to misspell about 2% of the words in the text and talk in a more colloquial fashion.

As things stand right now, I don’t think there is a reliable way to tell whether something was written by AI or not, and relying on typos is not a wise thing to do.

I worked with a cook who had previously cooked in the military. He told me his boss would occasionally throw an entire egg into the powdered eggs so people would think they were using real eggs. Don’t know if that’s true or not, but moral of the story: don’t trust the typos.

A while back, Google trained an AI to learn to speak like a human, and it was making mouth noises and breathing sounds. If AI is trained on human texts, it will 100% insert typos.

You can easily have an AI include some random typos. Don’t be fooled by them.
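For what it’s worth, you don’t even need an AI for that part. Here is a rough sketch of how little it takes to sprinkle typos into clean text. To be clear, this is not anyone’s actual pipeline: the `add_typos` function, its parameters, and the adjacent-letter swap are all made up for illustration, and the 0.02 default just echoes the “about 2%” guess from upthread.

```python
import random
from typing import Optional


def add_typos(text: str, rate: float = 0.02, seed: Optional[int] = None) -> str:
    """Swap two adjacent inner letters in roughly `rate` of the eligible words.

    Hypothetical helper for illustration only.
    """
    rng = random.Random(seed)
    words = text.split(" ")
    for i, word in enumerate(words):
        # Only touch purely alphabetic words with at least 4 letters,
        # so punctuation and short words are left alone.
        if len(word) >= 4 and word.isalpha() and rng.random() < rate:
            # Pick an inner position; first and last letters stay in place.
            j = rng.randrange(1, len(word) - 2)
            words[i] = word[:j] + word[j + 1] + word[j] + word[j + 2:]
    return " ".join(words)


if __name__ == "__main__":
    sample = "This sentence was written carefully and then ruined on purpose"
    print(add_typos(sample, rate=0.5, seed=1))
```

Keeping the first and last letters fixed makes the result look like a plausible slip rather than noise, which is exactly why a stray typo proves nothing about who wrote the text.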

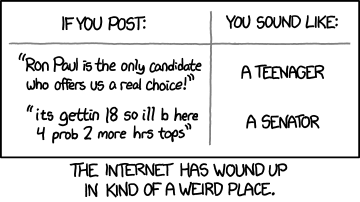

Somehow I can pretty easily tell AI by reading what they write. The motivation behind what they’re writing is a big one, and it depends on what they’re saying. ChatGPT and shit read like a Wikipedia-style description with some extra hallucination in there. Real people will throw in some dumb shit and start arguing with you.

I have a janitor.ai character that sounds like an average Redditor, since I just fed it average Reddit posts as its personality.

It says stupid shit and makes spelling errors a lot, is incredibly pedantic and contrarian, etc. I don’t know why I made it, but it’s scary how real it is.

What motivation would someone have to randomly run that?

Also, you just added new information to the discussion about something you personally did. Can an AI do that?

It is an AI. It’s a frontend for ChatGPT. All I did was coax the AI to behave in a specific way, which anyone else using these tools is capable of doing.

okay chatgpt, that’s what you want me to believe anyways…

As an AI language model, it is impossible for me to convince you that I am a real human being. :P

Also, re-reading the conversation, I think I misunderstood your previous comment’s intent. If you meant whether an AI could post comments on Lemmy naturally, like a real person could? Yeah… I don’t see why not. You can already make a bot that reads posts and outputs its own. Just connect an AI to it and it could act like any other user, and be virtually undetectable if trained well enough.

deleted by creator

They’re not saying they treat the lack of typos as a bad sign, but rather that they treat typos as a good sign. Those are not the same thing.

Lmao imagine getting referred to a doctor for surgery, you look them up, and their professional webpage is like, “i wen’t 2 harverd”

Kind of like how tiny imperfections in products make us think they’re handmade.

deleted by creator

Pier 1 thanks you for your business.

Tiny brown hands, most likely